4 min read

Understanding Tokenization in Banking and Finance

Martin Rupp (guest)

:

modified on 24. July 2020

Martin Rupp (guest)

:

modified on 24. July 2020

- Home >

- Understanding Tokenization in Banking and Finance

Tokenization is a generalized concept of a cryptographic hash. It means representing something by a symbol (‘token’).

A social security number symbolises a citizen, a bank account number represents a user's bank account, a labelled plastic token represents real money deposited at the casino's cashier, etc.

Tokens in cryptography represent confidential information. Its token does not reveal useful information. Cryptographic hashes, one-way functions with the lowest chance that two bits of information have the same token representation, are usually the same as cryptographic tokens.

Most programmers and sysadmins know (or should know) that passwords are usually represented by tokens on the machines. For example, an old machine will not store the password “Belinda@112” but rather the token:

eP7L76Ad526e6

as a result of a DES (Data Encryption Standard) operation with a salt value of wC.

One issue with tokens is that they can be broken if attackers are able to build a dictionary compiling the correspondence between the original value and the token.

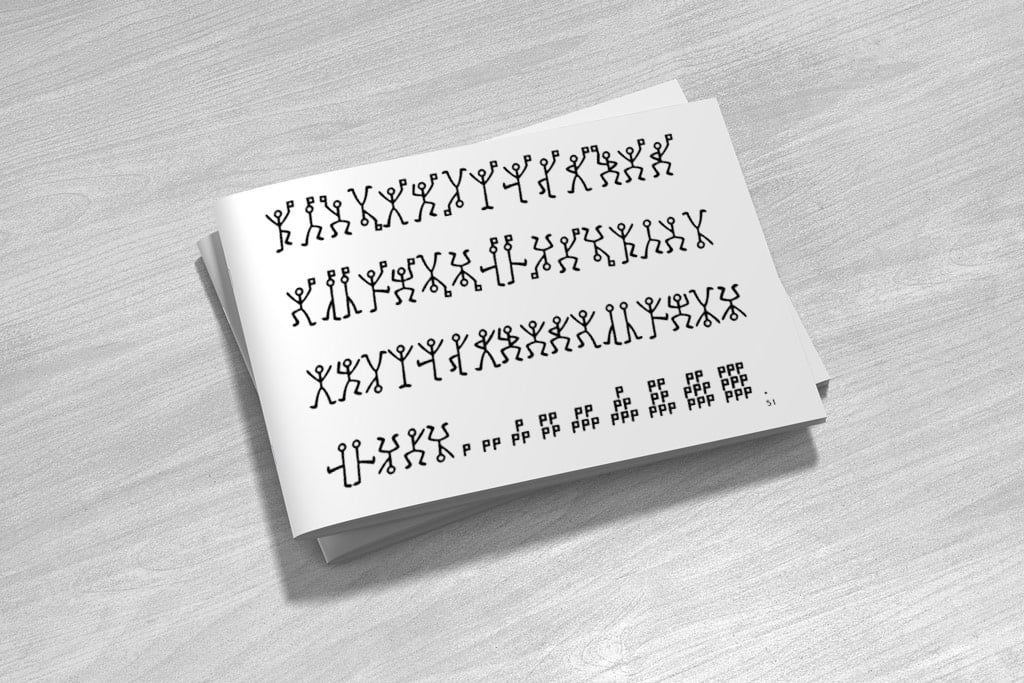

A token can also be a graphical/symbolic representation. For example, let us recall the “dancing men” from the famous Sherlock Holmes detective stories.

Each letter of the alphabet has been tokenized by a “dancing man” symbol. The original letters are not exposed and then there is no way to understand the meaning of the original text that is protected, unless that the corresponding dictionary is known.

In the banking industry, the PCI-DSS requirements usually command or recommend that credit card information, which is sensitive by nature, is tokenized or encrypted when stored in databases.

Tokenization versus Encryption

Tokenization can be seen as a form of encryption, where a big dictionary is created that links entries and tokens. Decryption is hardly possible or even totally impossible. The usage of that huge dictionary consists of looking at a symbol, then looking at an entry, and checking if the symbol represents the entry or not. It then differs from a normal encryption scheme that can decipher any symbol if given the right key. In tokenization, the “big” dictionary is the key itself and the only way to reverse tokens is to create the reversed dictionary, which is usually supposed to be impossible. But this is not always truly impossible. Recently, terabytes of “Rainbow Tables” have been created and can crack, for example, tokenized Windows passwords.

Note that “standard” cryptographic algorithms like AES or RSA can be effectively used to generate tokens, the goal being to map an entry to a token, and not consider decryption.

Finally and less obvious, “pure” random functions can be used to generate tokens. The correspondence between the information and the token representing it has been maintained in a dictionary. This is probably the best and ideal way to create tokens. For instance, for any information not already represented by a token, a new random 200 bits of data is generated and registered as a new entry in the dictionary.

Encrypted data can be deciphered, but the tokens must be unmapped to make sense, so they add obfuscation to the security.

Tokenization in the Banking Context

In the banking industry, tokenization has great importance. For instance, the PAN (Primary Account Number) is not to be exposed in databases. Therefore, a token/surrogate PAN is usually substituted to represent the PAN. For example, the following dictionary shows a conversion between some PANs and tokens.

|

|

Here the tokens do not preserve the original formatting. Tokens have better usage when they respect the like-to-like format rule. For instance a 16 digit PAN should be represented by a 16 digit token, eventually respecting the Luhn algorithm.

Visa has a strong tokenization requirement and so does the EMV consortium, as described in “EMV® Payment Tokenisation Specification”. Here are a few stories from the banking industry to illustrate the everyday use of tokens.

1) All EMV transactional data will be tokens

The actual trend in the EMV tokenization is that ALL transaction data will become tokens! Not just the PAN or related card data…

2) Combating CNP fraud

Card-not-present (CNP) fraud consists of using credit card data for fraudulent online “web” transactions. Such data consist of the PAN, expiration date, cardholder name and the CVV / CVC. These data are usually obtained by compromising unencrypted and unprotected databases merchant databases. Tokenization prevents merchants from storing card data but allows the storage of the token, which will be useless to attackers.

3) Securing card-on-file

Card-on-file is the process of collecting initial card data to store it and use it for recurring payments, for example. During the card-on-file process, tokens are requested and stored instead of credit card data. At each renewal period, the system will automatically send the tokens to the token gateway and charge the right account via de-tokenization.

4) Tokenization reduces false declines

A false decline (false positive) occurs when a genuine customer within their spend limit cannot place an online transaction. The reasons behind this are complex and the error messages are usually generic on purpose. It has been proved that Network EMV tokenization reduces such false positives.

Why Tokenization is Not Trivial

At first glance, one may believe that tokenization is not hard, that the concept is simple and an average programmer could create a hash function that will transform any sort of information into tokens. That is a big mistake! Here are the design issues that challenge a good token generation service:

- The token vault. The vault where the dictionary information/token is maintained must be secure. Otherwise, there is no real point in the whole tokenization!

- Collision-free. The tokens should be collision-free otherwise there will be a risk that the wrong account may be charged in lieu of the right one!

- Speed. The token generation and comparison in the vault should be fast.

- No “Rainbow Tables.” The dictionary information/token should not be reversed and an inverse dictionary should never be built.

- Quality of service. Tokens should not be able to be corrupted by hardware or electrical faults.

This is just an overview of the challenges created by a token generation service.

Conclusion

Tokenization is a major asset when considering data protection. It is not the same technique as encryption and must be considered to be used in addition to encryption in banking transactions. Tokenization is a very efficient way to prevent the leak of credit card data, especially PANs. On the other hand, it will never replace encryption. Tokenization is unable to create secure channels or provide authentication mechanisms but Tokenization is great for data protection.

References and Further Reading

- More articles on tokenization (2018 - today), by Martin Rupp, Dawn M. Turner, and more.

- More articles on Crypto Service Gateway (2018 - today), by Chris Allen, Jo Lintzen, Terry Allen, Rob Stubbs, Stefan Hansen, Martin Rupp, and more.

- EMV Payment Tokenisation Frequently Asked Questions (FAQ) – General FAQ (2017), by the EMV Consortium

- PCI DSS Applicability in an EMV Environment, A Guidance Document, Version 1 (5 October 2010), by the PCI Security Standards Council