NIST's Post-Quantum Cryptography Standardization Process

Cryptomathic : modified on 11. February 2022

Most public-key (asymmetric) cryptography algorithms in use today are vulnerable to attack by large-scale quantum computers. To standardize post-quantum cryptography (PQC), NIST is evaluating a number of PQC candidates with the aim of standardizing one or more quantum-resistant public-key algorithms.

Expectations for New Public-Key Cryptography Standards

The envisioned public-key cryptography standards must be capable of protecting sensitive government information well into the future, including after the arrival of quantum computing. These standards shall specify one or more additional unclassified, publicly disclosed digital signature, public-key encryption, and key-establishment algorithms that are available worldwide.

NIST has so far run several rounds of evaluation of candidate quantum-resistant algorithms and has shortlisted some of them following public evaluation and feedback.

NIST’s Iterative Standardization Process

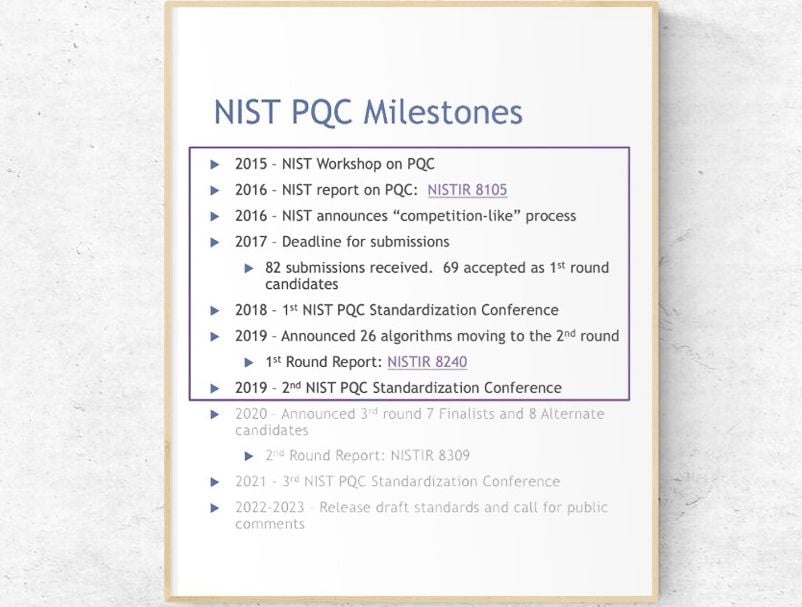

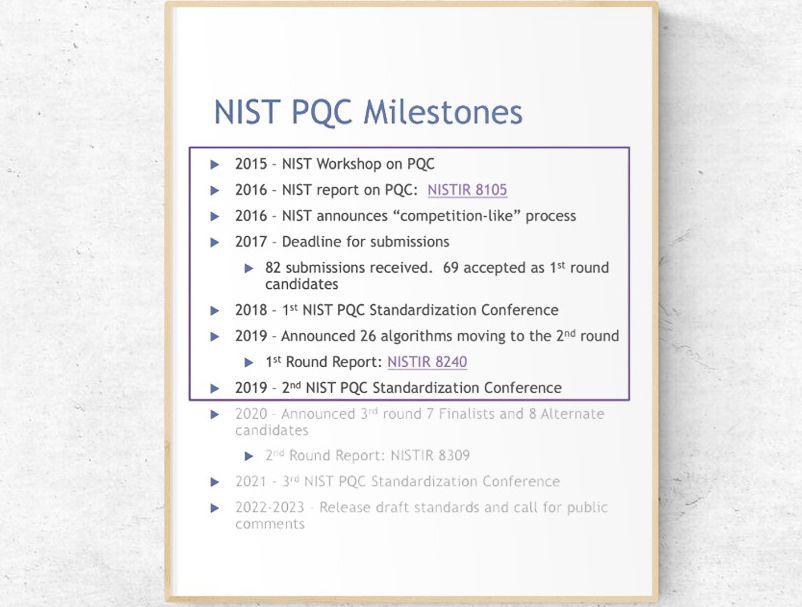

In 2016, NIST initiated a standardization process with a call for proposals, which has so far led to three rounds of evaluation. Throughout, the submitted algorithms have been subjected to cryptanalytic attacks to test their security. 69 submissions were accepted into the first round, of which 26 advanced to the second round (announced in January 2019). The third round, announced in July 2020, comprises 7 finalists and 8 alternates. The alternates will require more verification time and will be passed into a fourth round of analysis. NIST intends to publish standardization documentation by 2024.

Source: NIST

NIST’s process was to first solicit public comment on submission requirements, and then to draft minimum acceptability requirements and evaluation criteria for candidate algorithms. The choice of algorithms can only be based on current information, experience and theory. These standards are unlikely to remain static for long, because security requirements, and consequently security standards, evolve continuously in response to new evidence, breaches and research.

For example, in a 2021 side-channel attack on the third-round finalist FALCON, researchers demonstrated vulnerabilities that "can be used to forge signatures on arbitrary messages".

NIST’s process of standardizing and upgrading algorithms will be ongoing and dynamic. Quantum-resistant algorithm standards will very likely be much shorter-lived than their pre-quantum counterparts, many of which have lasted several decades.

Preparing for a Post-Quantum World

PQC is expected to become commonplace within the next 10 years. During these 10 years, algorithms and standards will change. This calls for an architecture in which such modifications are fast and require minimal effort. We call such architectures crypto-agile.
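As a rough illustration of what crypto-agility can mean at the code level, the minimal Python sketch below keeps the choice of algorithm in a single policy entry and stores an algorithm identifier alongside every protected message. All names here (`Scheme`, `REGISTRY`, `POLICY`, `protect`, `verify`) are hypothetical and chosen for this example only. The two HMAC variants are stand-ins taken from the Python standard library; a real deployment would presumably register classical and post-quantum signature or key-establishment schemes instead.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Scheme:
    """One pluggable algorithm: an identifier plus the function that applies it."""
    name: str
    tag: Callable[[bytes, bytes], bytes]


# Registry of available schemes (hypothetical). The HMAC variants are
# stand-ins only, used because they ship with the standard library.
REGISTRY: Dict[str, Scheme] = {
    "hmac-sha256": Scheme(
        "hmac-sha256",
        lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    ),
    "hmac-sha3-256": Scheme(
        "hmac-sha3-256",
        lambda key, msg: hmac.new(key, msg, hashlib.sha3_256).digest(),
    ),
}

# Policy: the only place that names the algorithm used for new operations.
POLICY = {"current": "hmac-sha256"}


def protect(key: bytes, message: bytes) -> dict:
    """Protect a message with whatever scheme the policy currently selects."""
    scheme = REGISTRY[POLICY["current"]]
    # The algorithm identifier travels with the result, so older data
    # stays verifiable after the policy has moved on.
    return {"alg": scheme.name, "tag": scheme.tag(key, message)}


def verify(key: bytes, message: bytes, envelope: dict) -> bool:
    """Verify using the scheme named in the envelope, not the current policy."""
    scheme = REGISTRY.get(envelope["alg"])
    if scheme is None:
        return False  # unknown or retired algorithm
    return hmac.compare_digest(scheme.tag(key, message), envelope["tag"])
```

In this sketch, migrating to a new algorithm amounts to registering it and changing `POLICY["current"]`; application code that calls `protect` and `verify` stays untouched, and data protected under the earlier algorithm can still be verified until that algorithm is removed from the registry.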

For businesses, the time to prepare for a post-quantum world is now, before NIST issues its standards. The first step is to determine which data is most attractive to cybercriminals. The systems holding the most valuable data need to take priority, as they will be the first targets.

They might even be targeted now: attackers could capture and store encrypted data today and decrypt it once quantum computers are capable of doing so.

Businesses need strategies built around crypto-agile architectures. Such strategies should guide them in using supposedly quantum-resistant encryption, based on what is known today and on which data is at highest risk, and in planning infrastructure upgrades over several years to come. As explained above, we cannot expect standards to remain rock-steady. They will evolve over time, in step with new evidence and lessons learnt. Crypto-agility will allow architectures to be developed further as new insights, standards and experience emerge.

To stay ahead of threats, we invite you to talk with our experts about how you can achieve crypto-agility with Cryptomathic’s Crypto-Service-Gateway, which automatically manages the evolution of algorithms and policies in parallel with the advent of PQC.

References and Further Reading

- Selected Articles on Quantum Cryptography (2017-today), by Dawn M. Turner, Rob Stubs, Terry Anton and more

- Selected Articles on Crypto-Agility (2017-today), by Dawn M. Turner, Jasmine Henry, Rob Stubs, Terry Anton and more

- Post-Quantum Cryptography Standardization (retrieved February 2022), by NIST Computer Security Resource Center

- Third PQC Standardization Conference - Session I Welcome/Candidate Updates (June 2021), by NIST

- FALCON Down: Breaking FALCON Post-Quantum Signature Scheme through Side-Channel Attacks (2021), by Emre Karabulut and Aydin Aysu

- Post-Quantum Cryptography (retrieved 15.01.2022), by the NIST Information Technology Laboratory - Computer Security Resource Center

- NISTIR: Report on Post-Quantum Cryptography (April 2016), by the National Institute of Standards and Technology